GML Express: large-scale challenge, top papers in AI, and implicit planners.

“Why do old men wake so early? Is it to have one longer day?” Ernest Hemingway

Welcome to the Graph ML newsletter!

For some time I struggled with the format of this newsletter: whether to write about news and blog posts or about my recent insights into specific topics. So I decided to separate the two formats: emails that gather recent news in the field will be called GML Express, and emails that dive into very specific topics will be called GML In-Depth. Today's email is the first issue of GML Express, which covers announcements, videos, courses and tutorials, and blog posts.

Announcements

Graph machine learning challenges at KDD Cup 2021

There are two graph-related challenges at KDD Cup this year: OGB-LSC and City Brain.

The first one asks you to build graph models for link prediction, graph regression, and node classification tasks on graphs of unprecedented scale (the largest has ~250M nodes). Dates: 15th March - 8th June. The winners will be honored at the KDD 2021 opening ceremony.

The second challenge asks you to build a model to predict and optimize traffic on a city-scale road network, a task where GNNs work well. Dates: 1st April - 1st July. The top-10 winners will take home 10K USD.

Release of PyG-Temporal

PyG-Temporal is an extension of PyG, a popular PyTorch library, to temporal graphs. It now includes more than 10 GNN models and several datasets. With the world being dynamic, I see more and more applications where a standard GNN wouldn't work and one needs to resort to dynamic GNNs.

Blog posts

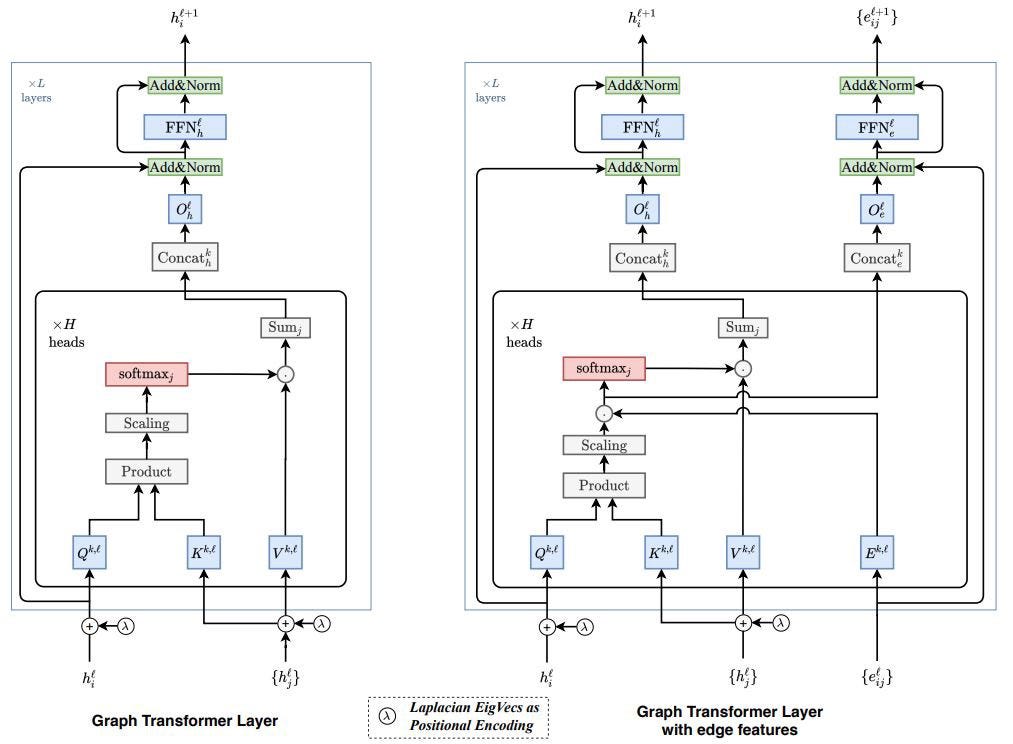

Graph Transformer: A Generalization of Transformers to Graphs

A blog post by Vijay Prakash Dwivedi about his paper with Xavier Bresson at a 2021 AAAI workshop. The model is a generalization of the GAT network with batch norm and positional encodings, and it still aggregates over local neighborhoods. After studying heterophily, I expect to see more work that goes beyond local neighborhoods, for example by learning which nodes to attend to across the whole graph. One recent instance of such an architecture is the paper Language-Agnostic Representation Learning of Source Code from Structure and Context (ICLR 2021), which combines the structure of the AST graph with the context of each word in an attention model for the code summarization task.
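To make the contrast between local and whole-graph attention concrete, here is a minimal sketch in plain Python. It is not the Graph Transformer from the paper: it omits learned projections, multiple heads, batch norm, and positional encodings, and the function names (`attention`, `local_mask`, `global_mask`) and the toy graph are assumptions made for illustration. The only thing that changes between the two variants is the mask of nodes each node is allowed to attend to.

```python
import math

def attention(features, allowed):
    """Dot-product attention where node i attends only to nodes j with allowed[i][j] set."""
    n, d = len(features), len(features[0])
    out = []
    for i in range(n):
        targets = [j for j in range(n) if allowed[i][j]]
        # Scaled dot-product scores against the permitted targets only.
        scores = [
            sum(a * b for a, b in zip(features[i], features[j])) / math.sqrt(d)
            for j in targets
        ]
        # Numerically stable softmax over the permitted targets.
        top = max(scores)
        weights = [math.exp(s - top) for s in scores]
        total = sum(weights)
        # Weighted average of the target features.
        out.append([
            sum(w / total * features[j][k] for w, j in zip(weights, targets))
            for k in range(d)
        ])
    return out

def local_mask(n, edges):
    """Each node sees itself and its direct neighbors (undirected edges)."""
    mask = [[i == j for j in range(n)] for i in range(n)]
    for u, v in edges:
        mask[u][v] = mask[v][u] = True
    return mask

def global_mask(n):
    """Each node sees every node in the graph."""
    return [[True] * n for _ in range(n)]

# Toy graph (hypothetical): a path 0 - 1 - 2 plus an isolated node 3.
features = [[1.0, 0.0], [0.0, 1.0], [1.0, 1.0], [2.0, -1.0]]
edges = [(0, 1), (1, 2)]

local_out = attention(features, local_mask(4, edges))
global_out = attention(features, global_mask(4))

print(local_out[3])   # the isolated node can only attend to itself
print(global_out[3])  # with the global mask it mixes in the other nodes
```

With the local mask, the isolated node keeps its own features unchanged, while the global mask lets it draw on the rest of the graph, which is the kind of long-range interaction that local aggregation cannot provide.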

Top-10 Research Papers in AI

My recent blog post about the top-10 most cited papers in AI over the last 5 years. I looked at major AI conferences (ICML, NeurIPS, ICLR, etc.) and journals (Nature), excluding CV and NLP conferences. One of the most cited papers is the seminal work by Kipf and Welling introducing the Graph Convolutional Network (GCN), which spawned a lot of research in graph ML. It was quite a refreshing experience to realize that much of what we use today by default (Adam, Batch Norm, adversarial attacks, etc.) was discovered only within the last few years.

Graph Neural Networks by Jonathan Hui

Two blog posts about GNNs by Jonathan Hui, a popular ML writer known for his RL, CV, and DL blog posts. In the first one he describes the basics of GNNs (message passing, pooling, etc.) as well as some popular models (ChebNet, MoNet, GAT, etc.). The second one covers recurrent GNNs, graph auto-encoders, spatio-temporal models, and applications.

The Easiest Unsolved Problem in Graph Theory

This is our blog post about the reconstruction conjecture, a well-known graph theory problem with 80 years of results but no final proof yet. It’s one of the grand challenges of graph theory and it has seen some progress recently, so hopefully it will be resolved in my lifetime. In the meantime, we considered graph families for which the reconstruction conjecture is known to be true and tried to come up with the easiest family of graphs that is still unresolved and has very few vertices. The resulting family is a type of bidegreed graphs (close to regular) on 20 vertices, which can probably be verified by brute-force computation (though it would take a year or so).
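For readers new to the problem: the conjecture says that a graph on at least three vertices is determined, up to isomorphism, by its deck, the multiset of subgraphs obtained by deleting one vertex at a time. The sketch below computes decks for tiny graphs by brute force. The helper names (`canonical_form`, `deck`) are hypothetical, and the canonical form tries every relabeling, so this is only an illustration for a handful of vertices and nowhere near the 20-vertex family discussed in the post.

```python
from itertools import permutations

def canonical_form(n, edges):
    """Brute-force canonical form: the smallest sorted edge list over all relabelings."""
    best = None
    for perm in permutations(range(n)):
        relabeled = tuple(sorted(
            tuple(sorted((perm[u], perm[v]))) for u, v in edges
        ))
        if best is None or relabeled < best:
            best = relabeled
    return best

def deck(n, edges):
    """Sorted list of vertex-deleted subgraphs (cards), each taken up to isomorphism."""
    cards = []
    for removed in range(n):
        kept = [v for v in range(n) if v != removed]
        relabel = {v: i for i, v in enumerate(kept)}
        # Keep only the edges that do not touch the removed vertex.
        card_edges = [
            (relabel[u], relabel[v]) for u, v in edges if removed not in (u, v)
        ]
        cards.append(canonical_form(n - 1, card_edges))
    return sorted(cards)

path = [(0, 1), (1, 2), (2, 3)]            # path on 4 vertices
relabeled_path = [(2, 0), (0, 3), (3, 1)]  # the same path with shuffled labels
star = [(0, 1), (0, 2), (0, 3)]            # star with center 0

print(deck(4, path) == deck(4, relabeled_path))  # isomorphic graphs share a deck
print(deck(4, path) == deck(4, star))            # these two trees have different decks
print(deck(2, [(0, 1)]) == deck(2, []))          # two-vertex graphs: same deck, different graphs
```

The last line shows why the conjecture excludes graphs on two vertices: a single edge and two isolated vertices both have a deck of two one-vertex cards.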

Learning materials

Deep Learning and Combinatorial Optimization IPAM Workshop

This is a great workshop at the intersection of ML, RL, GNNs, and combinatorial optimization. A very hot topic right now is how to solve hard combinatorial problems with machine learning tools and how to assist existing off-the-shelf solvers. Presentations are by top experts in the field and discuss applications of ML to chip design, TSP, physics, and integer programming, among others.

A Complete Beginner's Guide to G-Invariant Neural Networks

A tutorial by S. Chandra Mouli and Bruno Ribeiro about G-invariant neural networks, eigenvectors, invariant subspaces, transformation groups, the Reynolds operator, and more. Soon, there should be more tutorials on the topic of invariance and linear algebra.
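As a small taste of one of these concepts, the Reynolds operator turns any function into a G-invariant one by averaging it over all transformations in a finite group G. The sketch below is not taken from the tutorial: it uses the permutation group on three coordinates, and the names `reynolds`, `make_permutation`, and the example function `f` are assumptions chosen for illustration.

```python
from itertools import permutations

def reynolds(f, group):
    """Return the average of f over every transformation in a finite group."""
    def averaged(x):
        return sum(f(g(x)) for g in group) / len(group)
    return averaged

def make_permutation(p):
    """Build the transformation that reorders the coordinates of x according to p."""
    return lambda x: tuple(x[i] for i in p)

# The group S3: all six permutations of three coordinates.
S3 = [make_permutation(p) for p in permutations(range(3))]

def f(x):
    """A function that clearly depends on the order of its inputs."""
    return x[0] + 2 * x[1] * x[2]

f_bar = reynolds(f, S3)

print(f((1, 2, 3)), f((3, 1, 2)))              # f changes when the input is permuted
print(f_bar((1, 2, 3)) == f_bar((3, 1, 2)))    # the averaged function does not
```

Averaging works because applying a group element to the input only reorders the terms of the sum, so the total stays the same.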

CS224W: Machine Learning with Graphs 2021

CS224W, taught by Jure Leskovec at Stanford, is one of the most popular courses on graphs. This year's edition includes extra topics such as label propagation, scalability of GNNs, and graph nets for science and biology.

Paper Notes in Notion

Vitaly Kurin discovered a great format to track notes for the papers he reads. These are short, clean digests of papers at the intersection of GNNs and RL, and I would definitely recommend looking them up if you are studying the same papers.

Videos

Theoretical Foundations of Graph Neural Networks

A video presentation by Petar Veličković, who covers the design, history, and applications of GNNs. A lot of interesting concepts, such as permutation invariance and equivariance, are discussed. Slides can be found here.
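For readers who have not met these two properties before, here is a minimal sketch, not taken from the talk. It assumes scalar node features and a plain sum aggregator, and the function names are hypothetical. Relabeling the nodes permutes the output of the message-passing step in the same way (equivariance) and leaves the graph-level sum unchanged (invariance).

```python
def message_passing(features, adjacency):
    """One step of sum aggregation: each node adds its neighbors' features to its own."""
    n = len(features)
    return [
        features[i] + sum(features[j] for j in range(n) if adjacency[i][j])
        for i in range(n)
    ]

def readout(features):
    """Graph-level output: sum over all nodes."""
    return sum(features)

def permute(features, adjacency, perm):
    """Relabel node i as perm[i] in both the features and the adjacency matrix."""
    n = len(features)
    new_features = [None] * n
    new_adjacency = [[0] * n for _ in range(n)]
    for i in range(n):
        new_features[perm[i]] = features[i]
        for j in range(n):
            new_adjacency[perm[i]][perm[j]] = adjacency[i][j]
    return new_features, new_adjacency

# Toy graph (hypothetical): a path 0 - 1 - 2 with integer node features.
x = [5, 7, 11]
A = [[0, 1, 0],
     [1, 0, 1],
     [0, 1, 0]]
perm = [2, 0, 1]  # node 0 becomes 2, node 1 becomes 0, node 2 becomes 1

out = message_passing(x, A)
x_p, A_p = permute(x, A, perm)
out_p = message_passing(x_p, A_p)

# Equivariance: the node-level outputs are permuted exactly like the inputs.
print(all(out_p[perm[i]] == out[i] for i in range(3)))
# Invariance: the graph-level readout ignores the node ordering.
print(readout(out_p) == readout(out))
```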

A Tale of Three Implicit Planners and the XLVIN agent

Another presentation by Petar Veličković about implicit planners, which can be seen as a middle ground between model-based and model-free approaches for RL planning problems. The talk covers three popular implicit planners: VIN, ATreeC and XLVIN. All three focus on the recently popularised idea of algorithmically aligning to a planning algorithm, but with different realisations.

Video and slides of GNN User Group meeting

The first meeting of the GNN user group covers the usage and the next release of DGL and features a talk by Le Song on combinatorial optimization. The second meeting discusses the new release of DGL, graph analytics on GPU, and new approaches for training GNNs, including those on disassortative graphs. Slides can be found in their Slack channel.

Geometric deep learning, from Euclid to drug design

Michael Bronstein talks about the history of geometry and how it is now applied within deep learning to applications such as drug design, recipe creation, and image reconstruction.

That’s all for today. If you like the post, make sure to subscribe to my Twitter and graph ML channel. If you want to support these newsletters, there is a way to do this. See you next time.

Sergey